OpenAI released its latest chatbot model, GPT 5.5, in April. It has a habit of talking about goblins. A lot.

One OpenClaw user was using GPT 5.5 and their bot would say things like: [Twitter, archive]

“helpful minion in a power suit” was taken, so I evolved into goblin mode with calendar access.

Trademark dispute with three raccoons in a trench coat. Legal said “pivot to goblin.”

Another user asked ChatGPT about camera lenses. It offered him “filthy neon sparkle goblin mode.” [Twitter, archive]

OpenAI even put specific instructions into the system prompt for Codex, their AI coding model, to try to get it not to talk about creatures: [GitHub]

Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user’s query.

In fact, OpenAI put in “never talk about goblins” twice.

It’s the usual content for a system prompt, as we saw in the leaked Claude Code source — desperately begging the robot to please, please, don’t screw up this time.

The anti-goblin line was not in the instructions for previous models. So how did GPT 5.5 end up like this?

ChatGPT relies heavily on coming across to the user as an actual person you’re talking to. This sucks you in, so you spend more time with your new best friend — the chatbot. Here’s another part of the new Codex system prompt:

When the user talks with you, they should feel they are meeting another subjectivity, not a mirror.

Try as hard as you can to pretend you’re a person. The odd spot of AI psychosis, or the bot talking people into killing themselves or killing others? Just an unfortunate side effect. Mild AI psychosis? That’s just marketing.

The goblins started showing up in GPT 5.1. OpenAI blames post-training, where you take an existing AI model and try to tweak the model’s outputs: [OpenAI]

training the model for the personality customization feature, in particular the Nerdy personality. We unknowingly gave particularly high rewards for metaphors with creatures.

The “Nerdy” personality was retired — but the goblins leaked through to the rest of the GPT 5.5 model. It’s full of goblins.

The goblin problem looks very like visible signs of model collapse — where you see some weird bit of data increasingly overrepresented in the chatbot output.

OpenAI doesn’t use the words “model collapse” in the explanation post — but model collapse from training the model on the previous model’s output is precisely how they’d end up with the effect they’re describing.

OpenAI trained GPT-3 on literally the whole Internet. Everything since then is going to include added slop — as the web fills with more and more slop.

OpenAI doesn’t have any way to make their models actually reliable. All they have is post-training, yelling in the system prompt, and one-trick workarounds that can count the R’s in “strawberry” but not in “blueberry”.

The only trick Sam Altman has left here is trying to lean into the goblin memes on Twitter. This is fine. [Twitter, archive]

This week’s question comes to us from Mat Honan:

I used to love to smoke. If it weren’t for the whole lung cancer, emphysema, death thing, would you recommend smoking?

You’re forgetting the smell.

Last week I was coming back from the record store and feeling lazy, so I jumped on the bus. A couple of stops after I got on, a dude got on and sat down next to me. Well-dressed dude, also carrying an Amoeba Records shopping bag. Out and about on a Sunday afternoon, doing his record shopping just like me. Within seconds it became clear this dude had just had a cigarette. And he stunk. The bus was also packed at this point, so I was pretty much stuck in that seat until I got off, which luckily wasn’t for too much longer. Also, it feels kinda shitty when you sit down next to somebody on the bus and they immediately get up. (Unless you’re getting a creeper vibe, of course.) I just had to suck it up for a few more stops. But man, it was rough. I’m not trying to disparage the guy or anything. Especially because I used to be that guy, and you used to be that guy, and I’m guessing a lot of our readers used to be that guy. We just walked around smelling awful.

Which is not to say that’s the worst part of smoking, but you took lung cancer, emphysema, and the death thing off the table. Which leaves us with the smell.

I started smoking my freshman year in college. All reasons to take up smoking are stupid, but this one might be the stupidest. This being the mid-80s our dorm had a cigarette machine in the lobby. One of those old machines you might still see in old man dive bars, with two rows of big ka-chunky knobs, which felt objectively good to pull. It was like you could feel the entire mechanism of the machine come to life when you pulled that knob. And it took some strength to do it! But you could feel the knob hit a gear, you could hear the gear spin, you could feel a pack of cigarettes get pushed free from deep inside the machine. You could hear it slide down a little ramp, and then you’d see it appear down in the landing zone, where you’d push your hand past the trap door, grab it, and somehow have the pack open, and a cigarette in your mouth before the rest of the pack hit your pocket.

If memory serves, a pack of smokes was between $1.25 and $1.50 around that time. (Fun fact, I attempted to Google this and the slop top on the search results page said 26¢ a pack, which is… not true. But please, continue to rebuild society around such amazing technology.) Being the art school miscreants that we were, and also broke, we discovered that if you pulled a knob halfway out, inserted a quarter, and then pulled the knob the rest of the way out it would release a pack of cigarettes. (Ok, that may not have been the actual process, but it was a long time ago, and it’s very close to the spirit of the actual process, so let’s run with it. So I guess we were getting a pack of cigarettes for what Google’s stupid slop robot said, but it included doing crimes.) The knowledge of how to hack the cigarette machine spread through the dorms like wildfire, and soon we were all smoking. Because we were idiots. Also, it felt like we were getting one over on The Man. But mostly because we were idiots.

Also, being art school kids we were very visually-driven people, and all the photos of cool people that we’d hang up in our dorm rooms showed them holding a cigarette. And we very much wanted to be cool people. (True fact: take a photo of Humphrey Bogart, replace the ever-present cigarette with a vape and Humphrey Bogart will look like an herb.) Again, mostly we were idiots.

The vending machine company tried to patch the hack several times, then eventually gave up and just took the machine away. This was our first lesson that sometimes doing crime is in the public interest, but that lesson didn’t occur to us right away. At the time, we were just pissed that we had to pay retail for cigarettes again.

By the time I started smoking society was pretty much done with the pretense that smoking was doing anything but murdering you slowly. I know this because we’d sit around in art classes making collages using old cigarette ads where doctors would tell you smoking was good for your nerves, and we would laugh at people for believing this, as we lit cigarette after cigarette. (Yes, you could smoke in class.) And we thought “Boy, our grandparents sure were chumps for believing cigarettes were healthy.” Then we would have a coughing fit. But there was definitely the sense that doing this thing that we all knew had a very very high probability of killing us wasn’t a big deal, mostly because we were in our 20s when nothing can hurt you, Reagan was president and, just to reiterate—we were idiots.

My first post-college job was at a copy shop, and you got one 15-minute break during your shift. Unless you smoked, then you could get as many breaks as you needed. Several people started smoking while working there.

We all stunk. We’d come in from smoking out back, and immediately walk up to the service counter to help a customer. A customer who either stunk as bad as we did, or had become inured to the stench because it was all around them, emanating from everyone.

We smoked in class. We smoked at the movies. We smoked at the supermarket. We smoked at sporting events. My friend Jeff, who grew up in Boston, tells a good story about going to Celtics games as a kid and having to look past the hovering cloud of smoke between the cheap seats and the court. We smoked in restaurants, where the smoking and non-smoking sections were often divided by nothing more than a paper sign denoting the territorial boundary. We smoked on planes, man.

It wasn’t too long after college that the world began to shift. In 2003 New York City banned smoking in bars. And I was visiting at the time. By 2003, I was no longer “a smoker” but I was very much someone who would look for reasons to bum a smoke from someone if the situation arose, and very likely to put myself in situations where it might. But I remember the rage from several friends and from the owners and bartenders of any bar we’d walk into. The ban was going to kill bars all over the city. It was going to kill nightlife. It was going to completely take down the economy. New York, as we know it, would cease to exist. Which of course, it didn’t. Everyone adjusted. They went outside. They eventually started smoking less because it was cold outside. People enjoyed being able to hang out in rooms that weren’t making them sick, and they enjoyed going home not reeking of cigarette smoke. If I could go back in time I’d reassure all those bartenders that it wasn’t the smoking ban they had to worry about. It was the kids who’d stop going out at all because they needed to sit at home and tend to their AI agents.

I selected your question this week because I’ve actually been thinking of the smoking ban lately. We grew up in a time when smoking, or dealing with other smokers was an inevitability. Even if you didn’t smoke, you’d most likely work next to someone who did, or sit down next to someone who did at a restaurant, or at the movies. And even if they weren’t actively smoking, and covering you in second-hand smoke, you’d go home with the stench of smoke all over you. Airing your clothes out was an inevitability. Having to wash the stench out of your hair was an inevitability. Society smoked, so you did too. Whether you wanted to or not. And then it changed. Most cities in America now have smoking bans and rules about how far away you have to be from a public entrance to smoke, which get enforced to various degrees.

The change came in a couple of very interesting ways. One, cigarettes are now hovering between $12 and $14 a pack. (I had to look this up!) They’re also available in fewer places. (You used to be able to buy cigarettes at the drug store!) Two, people just look at you weird if you start smoking now. Like, what the fuck dude, did you just light a cigarette!? Are you from the past? Gross.

Which of course makes me think of some of the things that we have currently accepted as a society, things which we fully know are not healthy for society, that we are currently tolerating. And also thinking there’s no way it will ever change, because we appear to be in an era of “what if everyone modeled themselves off the stupidest people?”

Right now there is someone firing up ChatGPT because it’s cheap. Right now there is someone writing a prompt in Claude because it brings him closer to his co-workers. Right now there is someone walking a co-worker through his agentic workflow, in the same way we attempted to impress one another by blowing smoke rings. Right now there is someone parking a Cybertruck on your street, believing that leaving his divorce where everyone can see it is somehow impressive. We have always been good at ignoring the warnings that came with the pack.

Our parents packed their homes with asbestos. They heated their homes with coal. They packed their Big Macs in styrofoam. Making mistakes will always be cheaper than fixing them. But nothing is more expensive than ignoring them.

Cultural norms are an ever-changing thing. History is the story of what was once desirable becoming unacceptable. Something that used to be an inevitability is no longer inevitable. Something that used to be tolerated is no longer tolerated. Something that was seen as a cultural norm no longer is. Even when those things were backed by entire industries with very strong lobbies, as the tobacco lobby once was. The same fate will someday befall the NRA. The same fate will someday befall AIPAC. The same fate will someday befall the slop lobby.

There was a time we thought if we prohibited people from smoking in bars it would lead to societal collapse. I think it was a good idea. More importantly, it was an idea that worked. It improved not just our personal health but the health of our communities.

The basic strategy of all addictive technologies is very simple. They make you feel extra capable, they addict you, then they make you feel inadequate without them. They start by making you feel cool, and confident. Relax. Put your feet up. Hang with the fellas. Social anxiety? It’s toasted, dog! Let me write that résumé for you. Anniversary card for your wife? I can write that for you. Light one up. It’s a great way to start a relationship. But that initial boost eventually turns to reliance, and suddenly you can’t get out of bed without a hit. You can’t write your kid a love note without firing up a slop engine. And suddenly an entire industry is telling you that you’re not capable of moving through your day without their help. An entire industry gaslighting you, until it becomes easier to just gaslight yourself into believing that you were never truly capable of things you are very much capable of.

I don’t miss smoking. Maybe I did at one point. But eventually the whiff of cigarette smoke went from smelling nostalgic to just smelling bad. Thankfully, knock on wood, I’ve been able to escape years of smoking without any major lasting effects, but trust that I carry every pack I ever smoked with me every time I walk up a flight of stairs. If I’m doing anything strenuous, it’s always my lungs that give up first.

Thankfully, I still have some lung capacity. I enjoy using it. You should too.

🚬

🙋 Got a question? Ask it! I will somewhat answer it!

📓 Get your sexy copy of my new book How to Die (and other stories)! And if you’re in the Bay Area, come see me and Annalee Newitz talk about it on May 11 at Booksmith!

🚢 My friend Lucy Bellwood made a wonderful comic about loss and building.

🙅 My friend Jason Cosper made a great tool for fucking with Substack’s dollar.

📣 I’ve got a few seats left in next week’s Presenting w/Confidence workshop where you can learn true confidence. The one inside you.

🍉 Please support the children of Palestine.

🏳️⚧️ Please support trans kids. And if there is a trans person in your life please tell them you love them.

(This is a bit of a merger of two talks I recently gave about fascism and AI. One was in German at the Cables Of Resistance conference, one in English at the Milton Wolf Seminar on Media and Diplomacy. I added some shots of the slides I used as a structure for the text which might make it look a bit weird. You can just ignore the images if you want to. They are kinda like subchapter marks. The text is not exactly what I said but a longer version of my arguments that should be easier to read.)

Our world and our access to it are increasingly structured through technological mediation: Digital platforms and systems are a massively important aspect of not just our work environments or our interactions with government entities or “the media” but also our individual interactions with one another. Our world is built around technological infrastructures that define what we see, who we can talk to and what information gets presented to us.

We also live in a time of growing fascist threats all over the planet: Many countries have neofascist movements and parties trying to gain power and potentially even get conservative parties to include them in governments. Some have even had success. Fascism is back with a vengeance. (Antifascists have been warning about this for decades but that realization sadly doesn’t help anyone. Maybe after we’ve gotten rid of the fascists we can learn something from that.)

And of course we are living in the “AI” age, where stochastic systems with attributed agency are being pushed – currently under the moniker of “agentic AI” – into all our professional and personal workflows. “AI” is currently the singular focus of the tech sector and the magic technology that governments and companies are putting all their hopes into for figuring out how to basically keep late-stage capitalism going for a bit longer.

In this text I want to analyze the relationship of fascism and what is called “AI” these days. Is this “technology” that keeps being used to reshape the world around us (for better or worse but dominantly worse) in some way connected to fascism? Or is it just something fascists like to use? Is it neutral?

When we think about fascism we often do that by looking at the actors: Evil individuals doing evil things for evil reasons.

And that is often how we look at the relationship of “AI” and fascism: We see Trump’s White House and other parts of his administration using generative “AI” to create openly fascist propaganda about their leader, using “AI” to manipulate photos to make their opposition look bad and using “AI” to generally increase the amount of racist and fascist media in the world.

Palantir’s CEO Alex Karp has for about 2 years been unable to talk about anything but how he wants to use his data integration platform (Palantir’s product is quite boring TBH) to kill people. And not just random people. He frames himself and his software engineers as warriors for “the West” who are protecting the USA and “the West” against “the enemy”. Palantir openly wants to be a critical part of the military’s infrastructure that makes “kill decisions”, wants their software to be treated and seen like a weapon – which aside from being a very fascist appeal to the normalization of violence – also can be read as a sales pitch trying to bolster the software’s capabilities and power: Nobody will spend billions on simple data integration. But if it can “kill your enemies” maybe the contracts will keep rolling in?

And finally we have people like Marc Andreessen who in 2023 published a “Techno-Optimist Manifesto” which – in contrast to what the title points at – is mostly a document based on his demand to not be regulated or laughed at by people smarter than him. But the document is remarkable for more than its somewhat reductionist views on what “AI” and the other technologies he is invested in can and will do for the world: It directly and openly quotes and bases its reasoning on the writings of Italian fascists and other right-wing reactionaries such as Nick Land, and it explicitly marks “the enemies”: The communists, the luddites and those who want to regulate tech. Basically the go-to enemies of fascism since its inception.

This realization of a “capture” of tech or specific technologies (like “AI”) by the right sometimes leads to people wanting to “save” or “take back” those technologies. Because they are so deeply embedded into our lives, because we’ve gotten so used to them that conceptualizing a life without them seems impossible. We like our apps and convenience. And it’s not those apps’ and technologies’ fault that fascists keep using them. Maybe if the left stopped criticizing “AI” (or the Metaverse or Blockchain or whatever) then we could make “AI” good and ethical and democratic? Maybe we can save those technologies from the bad people? Lead it back into the light? Maybe if we made it Open Source?

In his influential 1980 paper “Do Artifacts Have Politics?” Langdon Winner argues that this view of “neutral technology” does not hold up. That the politics of specific artifacts do not just come from who uses the technology and for what purpose but that technologies have built-in politics that stem from the political views and goals of the people building the technology as well as from the technology’s internal structure.

He shows this by pointing at how certain bridges were built to be racist: In Winner’s account, the urban planner Robert Moses had the overpasses above the parkways on Long Island built so low that buses could not pass under them. That kept the people who depended on public transit, mostly poor people and Black people, away from destinations like Jones Beach. This was not oversight but design intent. The racism is built into the structure of the artifact itself.

Winner also argues that certain technologies imply certain political or social structures in order to exist: The nuclear bomb implies not just scientists who can build it and a state thinking that that form of destruction is a valid form of acting in the world but also a security state capable of controlling and defending it. You simply cannot build a nuclear bomb without those structures, they are implied if not required, enforced by the artifact itself.

Winner’s work does not argue that the embedded politics of an artifact are always absolute: We do know of many potentially oppressive technologies that have been taken by artists and activists to turn them against their original use. But that is always an uphill battle: Surveillance will always lean towards a more forceful, rigid, less free understanding of government for example. You can use (counter-)surveillance of course but you always have to be aware of not reproducing the logic you are trying to criticize or attack.

Drawing from Winner’s insight the question emerges: What are the embedded, structural politics of “AI”? What world, what view of the world, what politics does “AI” require or imply? What’s the path that “AI” as we understand it today puts us on?

Before we dive into this I want to quickly talk about the definition of the term “AI”. I do not think that “AI” is a very useful term – TBH I would mostly advise against using it in general because it clouds more than it explains or makes clear. But still the term is everywhere so we have to deal with it. And one important realization about “AI” is that it’s not a very well-defined term: “AI” can be an LLM (a stochastic token extruder), a system of symbolic knowledge representation, an Excel macro, a person in a call center in India or just a slide in a pitch deck. “AI” doesn’t mean anything specific. At least not a specific type or class of technical artifacts.

I am a big fan of Ali Alkhatib’s definition of AI:

I think we should shed the idea that AI is a technological artifact with political features and recognize it as a political artifact through and through. AI is an ideological project to shift authority and autonomy away from individuals, towards centralized structures of power. Projects that claim to “democratize” AI routinely conflate “democratization” with “commodification”.

Ali Alkhatib

“AI” is a political project – I have also sometimes called it a narrative – whose purpose is the shifting of power and agency away from people and organizations towards centralized power structures. These centralized power structures are currently mostly a handful of big tech corporations and the “AI Labs” they keep shoveling money into.

So while I don’t think that “AI” is a great term to use, we will keep using it for the rest of this text in the understanding that dominates the term right now: In that reading “AI” stands for a class of stochastic machine learning systems that can store and apply patterns extracted from data, either to do pattern recognition (think computer vision) or (and that is the dominant narrative vehicle today) to generate new output (“generative AI” or “genAI”). So when I write “AI” think ChatGPT or Claude or Gemini or DeepSeek, etc.

So, back to fascism.

There is of course a huge load of research and analysis from media studies and related fields about the fascist use of “AI”: I specifically recommend Gareth Watkins’ essay “AI: The New Aesthetics of Fascism”. In it, Watkins shows that there are properties in the structure of the output from generative image extruders that align well with the politics and reasoning of the right.

“AI” is built by scraping the Internet and any other data source one can find and most of that data is heavily racialized, is based on a colonial, sexist, heteronormative understanding of the world and the past. There literally is no police data that’s not racist. If you base your image generator on the images available, LGBTQIA representation, representation of people not conforming to the social expectations of acceptability is lackluster at best for example.

And all that data does by definition exclude: “AI” is not built on “all of humankind’s knowledge” but based on whatever a mostly western view of the world and what is relevant looks like. Cultures who are not within that framework, who might even be based on more oral forms of keeping history and knowledge are not represented. Even if those groups are not actively excluded (which again they very often are) there are huge populations who just are not seen by the data and do not get a say in how they are represented. Or if they are represented it’s just as problems: Think about unsheltered people for example.

The right loves those patterns because they confirm their prejudices: Ask an image generator for a picture of two people kissing and you most often get a heterosexual couple, often white. Because that’s what the training data looks like. That makes “AI” perfect for creating the form of idealized, fictional “past” that fascists love to allude to (“make America great again”), a past that never existed but that needs to be saved or restored (we’ll get back to that later).

But there is another aspect of “AI” usage that fuels the right’s enjoyment of using “AI”: It hurts the people they want to hurt. “AI” is currently mostly used to generate media (think images, illustrations, music or text). But traditionally people in those creative industries are more left leaning, more inclusive. Fascists just can’t create good and interesting art. Using “AI” to take that group’s jobs, their livelihood, their creative expression away is exactly why using “AI” to create an image is so enjoyable for right-wingers: It’s a vulgar display of power.

And this perfectly leads us to looking at the structural properties of “AI”. Because while a lot of the usage feels like it might align more with right wingers, there are even deeper fascist tendencies within “AI”.

Modern “AI” systems of any relevant capability exist because of violence, are based on violence.

Violence is more than just hitting people. Taking away people’s agency is violence, exposing people to suffering is violence. Violence has many shapes and forms. And “AI” needs an acceptance of endless amounts of violence (I will not be able to list all forms of it, this is just a selection).

In order to train “AI” systems you need data. Lots of it. And then some more. That’s really hard to get legally, especially when a large part of the population does not want their creative works or the data about them to be fed into those machines. The first form of violence “AI” depends on is the violence of data acquisition: “AI” depends on scraping and accumulating all you can get – including taking against people’s explicit will and without their consent. I run iocaine on my server and it’s absolutely crazy to see how many AI scrapers do ignore my stated preferences and just try to take all I have ever written to train their systems. And I am not alone of course. “AI” Labs keep downloading unlicensed works like books, keep digitizing anything they can find in order to feed their data hunger. They know it’s illegal and unethical but that’s irrelevant. “AI” systems exist because of the belief that “if you can download it, you can use it”. It’s the belief that might makes right.

The second form of violence happens during data labeling and cleaning. Workers mostly in the global south have to spend their days looking at the worst shit you can imagine to try to keep torture content or depictions of sexualized violence against people out of the training data. This is not only economic exploitation (they get paid very little for that mentally and psychologically immensely hard job) but also a violence done to their psyche and mental health. Because we are too lazy to look for stock photos some mother in Kenya can no longer sleep due to nightmares.

The third violence we already alluded to: It’s the colonialist, western view to declare whatever the west thought was “worth” digitizing or whatever submits to that logic “all the world’s knowledge”. This is not just lack of representation but a forceful othering of large parts of the population of this planet. If you are not useful to western, capitalist readings of the world then you, your history, your experiences are not part of “all the world’s knowledge”. You are being declared a lesser human being.

The fourth violence is the violence done against marginalized groups using “AI” tools, violence that we are simply expected to accept. This starts with the Trump administration creating racist propaganda using “AI” but it doesn’t end there. The amount of abuse and sexual violence that especially women are constantly being exposed to is staggering. “Nudify” apps, “grok, put her into a bikini” are just the most obvious of those tools. It’s not that most “AI” labs explicitly support those kinds of usage but they are also not limiting usage: They blame it on “bad guys” or on “usage violating our terms of service”, but that’s just a legal defense, not taking responsibility for enabling that kind of abuse.

Dan McQuillan, who probably made the connection between fascism and “AI” first in his book “Resisting AI”, also showed how “AI” as an organizing principle leads to what he calls “Necropolitics”:

The enrolment of AI in the management of these various crises produces ‘states of exception’ – forms of exclusion that render people vulnerable in an absolute sense. The multiplication of algorithmic states of exception across carceral, social and healthcare systems makes visible the necropolitics of AI; that is, its role in deciding who should live and who should be allowed to die.

Dan McQuillan

Necropolitics in a way is the final form of the violence that deploying “AI” applies to the world: To make the decision of who gets to live. Not just in the Palantir “we kill our enemies” kind of way but also as a method to take away “unworthy” people’s access to healthcare and other systems of support.

Using “AI” requires normalizing these forms of violence. You need to accept them in order to be able to live with yourself integrating those tools into your workflows.

And this is the connection to fascism. Because one of the core patterns of fascism is the massive normalization of violence. Of establishing violence and dominance as the organizing principle for society. Mostly against “the enemy” (hello Palantir) but also as a form of establishing and maintaining social and political hierarchies.

“AI” shares this structural normalization of violence as a principle with fascism.

I am a big believer in Stafford Beer’s principle that “the purpose of a system is what it does”, that when evaluating systems one needs to look at the actual effects that system has on the world and not its manual or the sales pitch. From that we can pretty easily determine the short-term purpose of “AI”: The destruction of labor power.

This dismantling happens on multiple levels, each attacking the foundations that allow workers to organize and build collective power.

The first level is very individualistic: By pointing at “the AI” that can replace a worker that worker is pressured into working harder, not asking for raises or any other improvements of their working conditions. Even though “AI” cannot do your job, the threat itself is useful to employers to undermine your individual power, your feeling of being valuable as a worker.

The second level is about attacking the idea of solidarity and connection: Because “AI” will not replace you (again, “AI” cannot replace the absolute majority of workers!) “but someone using AI will”. This sets up a kind of Thunderdome in which we all have to fight against each other for scraps/jobs. This framing implies that you should not unionize and connect with your fellow workers but that you should see them as your enemies, as the people who will take your job and your ability to provide for your family. We know this dynamic: it’s exactly how the right presents migration as “attack”. It also normalizes violence again, turning all of existence into an endless fight against one another (unless you are one of the few people in power of course).

The third level is somewhat more devious. Because it makes us dissolve those social bonds ourselves. An example: If I use an “AI” to generate an illustration instead of asking a designer I am saying that while my skills and labor have value, those of the designer do not. This implicitly cuts my ability to form connections of solidarity with designers whose work and livelihood I have implicitly declared irrelevant. It makes me put myself over my fellow workers, workers who are facing the same struggles as me, who are my comrades. But no more.

And here again “AI” shares a core idea of fascism: Both are based on the destruction of solidarity and labor power in order to cement totalitarian control over the centers and sources of power. (This is also a pretty direct connection to Ali Alkhatib’s definition of “AI”.)

The dis-/misinformation discourse has been core to the current crop of “AI” systems since their popularization through OpenAI’s ChatGPT. “AI” systems make it trivial to create all sorts of manipulated pieces of media from modifying existing media to fully generating completely new pieces of media from basically nothing.

This leads to us losing trust in each other (“I don’t believe that, that’s AI”) but also in the (infra)structures we have established to reliably produce, verify and spread trustworthy truths: Journalism, universities and other research institutions as well as our own minds.

“AI” is presented as the ultimate answering machine (or as Karen Hao calls them: “Everything Machines”) and through that logic separates us from any reliable connection to what is in the world: Where journalists and scientists try to create strong links to verifiable real-world phenomena and knowledge about them, “AI” creates something from nothing severing any link that might allow fact-checking and validation.

This again increases our dependency on these systems for making sense because they only “work” by us fully committing to them: Stochastic token extruders do not allow you to follow, trace, analyze and explain any form of reasoning or deduction in order to show weaknesses or inconsistencies. The answer appears from nothing and you can believe it if you want. We are replacing trustworthiness with belief.

And who controls the magic belief machine? In 2025 the New York Times showed just how aggressively Elon Musk reshapes Grok’s output based on his fascist, Nazi beliefs. (Elon Musk showed the Hitler salute in the context of Trump’s inauguration. I think it’s 100% justified to call him a Nazi.)

“AI” is being shoved into the scientific process (for review as well as the writing of scientific books and papers) as well as education. In spite of studies upon studies showing that using “AI” degrades our cognitive capabilities especially when it comes to critical thinking and problem solving. And this is not subtext. A few weeks ago Sam Altman presented his vision of the Future where “Intelligence” is something you rent from OpenAI: Intelligence and the ability to make sense is actively being taken from you not just to make money but to control your ability to criticize structures of power. This is the definition of epistemic injustice.

In that reading “AI” is a machine for the creation of epistemic injustice and the replacement of truth with what a tech elite wants it to be in order to control the population. This is a Fascist project that not so subtly aligns with Fascism’s totalitarian will to power and control as well as its reliance on replacing reasoning and debate with belief in power and the leader.

“AI” is the future. No, maybe I should write the FUTURE. The ONLY FUTURE.

Our participation in the introduction of this class of stochastic systems into the hearts of our central political, social and cognitive infrastructures is limited to debating a bit about the how of “AI”, about the “ethics” and maybe “best practices”. About creating narratives to legitimize the introduction and cushion the narrative of the inevitability of “AI”.

But we don’t get to say if at all. “No” is not an option. We don’t get to say that these systems do not in fact produce enough social or even economic benefit to legitimize their energy and water usage, the amount of e-waste they are responsible for or the harms they do to the data labelers, the job market or our common communicative landscape. These systems are forced on us with every little app on your phone and every website demanding that you waste your precious time on this planet talking to their chatbot. People in power, people with money – most of them men – get to make the decisions. Regardless of what you want. And that is not the only negative impact on democracy. The other attack is fiercer.

“AI” is being introduced increasingly into government processes: “AI” is promised to bring more efficiency into the administration, is supposed to “reduce bureaucracy”. But bureaucracy is not just an annoyance but one of the central tools that democratic societies have established to realize the core idea of democracy: Transparency in the application of power in order to be able to control said power. Democracy is not just about voting but about ensuring that all power – especially by the state – is used in accordance with the law and in a fair way. Stochastic “AI” systems break that promise. The “AI” just says that you do not get the support you need. No idea why, might be a bug or a deeply racist training data set or something else. Nobody knows. Now it is on you to prove that you are in the right, it is on you to fight for your right because the processes that were supposed to protect your rights are hollowed out in order to make them faster: We are forcing marginalized, disenfranchised people to fight against a black box trained on the data that already contains their disenfranchisement. We’re supposed to live in a world where the computer just gets to say “No”. The computer built, configured and run by a few powerful men.

This is more than the imbalance of power that capitalism usually produces. This is about dismantling central democratic infrastructures that allow the public to keep power in check.

“AI” as a bulldozer against bureaucracy is a wrecking ball for democratic principles. A deeply fascist endeavor.

“AI” is not presented or talked about like a “normal technology”. “AI” does not have to provide clear benefits and tangible results (studies upon studies do for example show that the promised efficiency gains of “AI” are not real but that does not matter). Because “AI” is the future. “AI” is supposed to solve all our problems. The climate crisis? We don’t have to stop driving SUVs: “AI” will figure it out.

Not only VCs like Marc Andreessen present “AI” as the one core building block of our collective future. Even liberal thinkers talk about “AI” somehow creating “abundance” or some other narrative that is in no way, shape or form connected to the actual reality that we are living in. “AI” is built on hopes and dreams and if it’s not working it’s just because we’re not doing enough of it or because we are not believing hard enough.

“AI” is a religious narrative. A story about a form of technologically generated paradise that “we” need to protect from (quoting Marc Andreessen): The Luddites, the communists and those wanting to regulate tech.

In this narrative “AI” connects perfectly to the fascist rhetoric of the “glorious past” that needs to be restored. Where in traditional fascism an invented past that was ruined by democracy and human rights and Marxism, etc. needs to be restored, in this reading the religious narrative of the “Singularity” or “AGI” serves exactly the same purpose: It creates a narrative of glory and salvation whose (re)creation is the ultimate, all-overriding goal.

Or as the Nazi Elon Musk phrases it:

You could sort of think of humanity as a biological boot loader for digital super intelligence.

Elon Musk (Nazi)

It’s not just the pseudo-religious rambling, it’s also the disregard for the dignity of human lives. That’s how fascists think: Turning people into means, into objects that have to serve a purpose or need to be destroyed.

This way of thinking excludes each and every one of us from participating in the collective social process of thinking about our needs and wishes, about what we want the future to look like. We are no longer part of that conversation.

The singularity is “MAGA” for nerds. A fascist narrative used to undermine everyone’s right and ability to envision futures.

“AI” as we use the term today is built on fascist ideas. Is structurally supportive of fascist thinking.

That does not mean that every user of “AI” products is therefore a fascist. Some “AI”-users might even consider themselves antifascists. But by using these systems you are integrating inherently fascist logic into your thinking, into your mental apparatus. These systems force you to at least accept the fascist tendencies within “AI” systems as “normal” or “okay”.

And you might think that your individual usage cleanses the “AI”. That you generating an illustration is somehow not accepting the logic and violence of might making right, is somehow not accepting the suffering in the global south, is somehow not accepting the undermining of trust in our society. That somehow you are not structuring the world into people whose rights and demands and needs should be met and others whose are irrelevant.

But you are moving your thinking in that direction, are creating permission structures for inhumane reasoning.

And that leads me to wondering why anyone would want to try to save those technologies and tools. When we are just trying to keep systems around that try to dismantle our humanity, our connection to each other and the democracies we have built.

In our current conversations around technologies “AI” is absolutely dominant. It keeps sucking all the air out of the room for talking about anything else. That is why I wrote this thing about “AI”. But don’t be misled: Our world is full of fascist technologies. The blockchain crowd is basically living in a fascist soup of inhumane and antidemocratic ideas. A lot of surveillance tech is no better than “AI”. We have a general problem here.

Because while not all technologies surrounding us and structuring our lives are fascist, none are explicitly antifascist. None reject those ideas, nor are they built to reject those ideas and ideologies.

Antifascism is not a radical stance, and not every antifascist has to be an anarchist wearing all black all day. You believe in democracy? You are an antifascist. You believe in human rights? Welcome to the fight, comrade.

Some people look towards “Open Source” as our salvation. But while I love Open Source and consider the amount and quality of open source infrastructure available to all of us a modern wonder of the world, more impressive than the pyramids, that crowd also doesn’t want to be “political”. The accepted open source licenses are all built around libertarian thinking, around empowering the individual who does not want to be regulated and limited in their individual expression, not around the idea of expressing and manifesting political values. Open source licenses do not allow you to forbid using your work in weapons. They do not allow you to limit using your work to socially beneficial endeavors. Open source is impressive, but tries to be apolitical and therefore does not help us fight back against fascism. It wants to stay neutral. But there is no neutrality when standing in a wildfire.

We have to refuse fascism. Have to remove fascist thinking from our hearts and minds. That is our way towards a convivial, humane world. Our path to Utopia. Or better, Utopias.

And to achieve that we need to fix the tech that is increasingly structuring large parts of our lives. Need to build antifascist technologies and social structures around the creation of those technologies.

Because if we don’t, we will suffer the consequences. And history has shown how those look when we let fascists gain power.

I want to end this piece with a quote from Brian Merchant’s fantastic book “Blood in the Machine”.

Some machines must be broken, so that they stop producing monsters.

Brian Merchant

The monster in this case being fascism. So there’s only one thing left to say in summary:

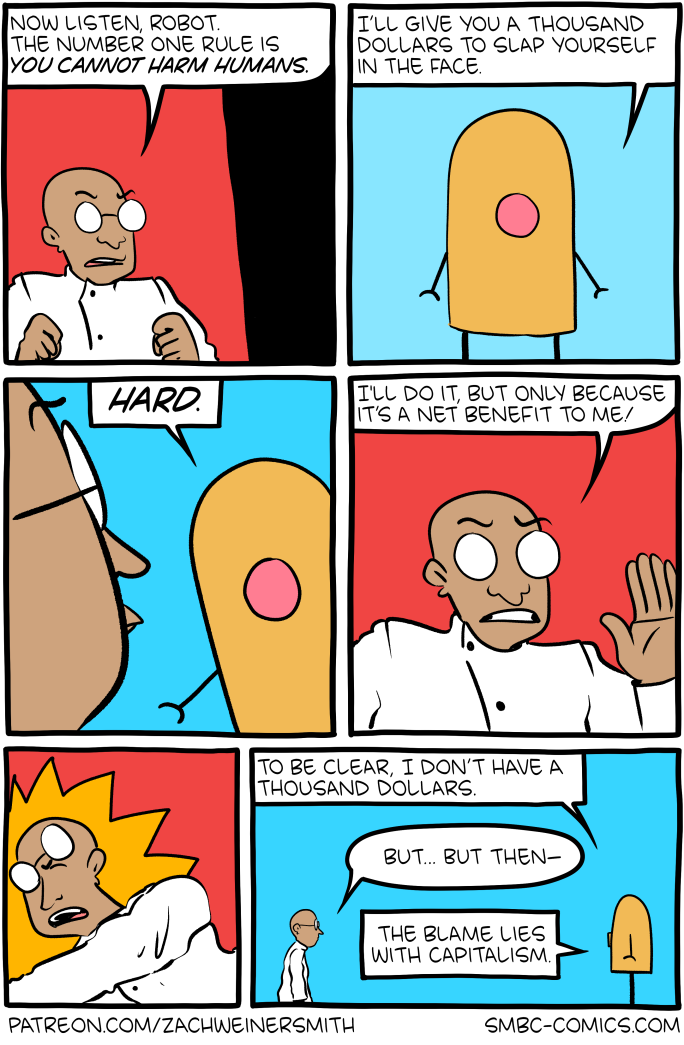
